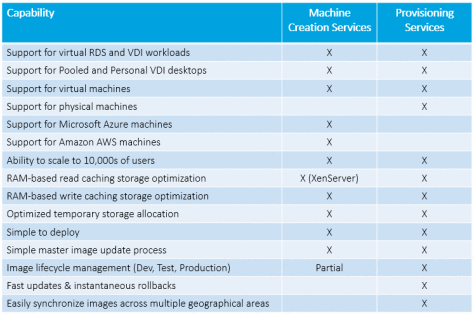

As I was discussing the storage optimization capabilities in the Machine Creation Services vs Provisioning Services debate, I mentioned the use of a XenServer RAM-based read cache. This can be misunderstood as XenServer IntelliCache (a mistake I’m sad to say I’ve made in the past).

XenServer IntelliCache (released with XenServer 5.6 SP1) and XenServer RAM Cache (released with XenServer 6.5) are two different capabilities of XenServer, both of which try to reduce the IO impact on shared storage.

Let’s walk through different deployment scenarios with Machine Creation Services in XenApp and XenDesktop 7.9.

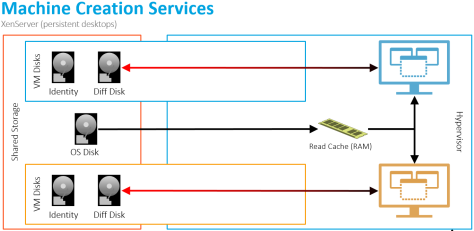

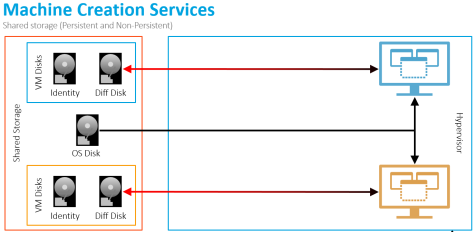

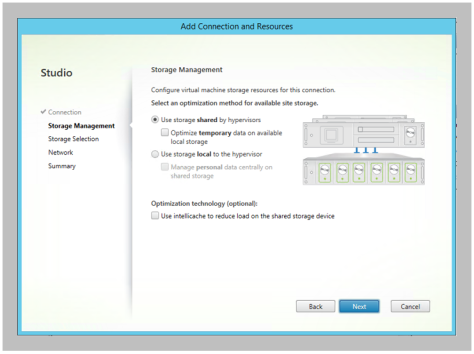

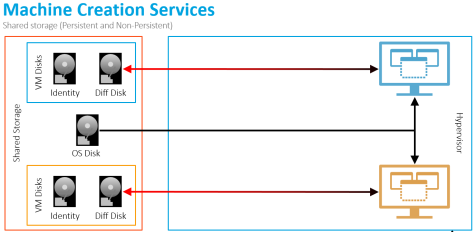

Scenario 1: Shared Storage on any Hypervisor

When defining a host connection, the default storage management option is to use shared storage.

This configuration results in the following architecture

The virtual machines read (denoted by the black lines) from the master OS disk on shared storage. Writes (denoted with the red lines) from the virtual machine are captured in the differencing disk, located on the same shared storage as the master OS disk.

Note that the virtual machine also reads from its own differencing disk, not only from the master OS disk.
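To make the read and write paths concrete, here is a minimal sketch of a master OS disk with per-VM differencing disks. It is a toy model, not how any hypervisor implements it: the class name `DifferencingDisk`, the block numbers, and the string contents are all made up for illustration.

```python
class DifferencingDisk:
    """Toy model of a shared master OS disk plus one VM's differencing disk."""

    def __init__(self, master_blocks):
        self.master = master_blocks  # shared, read-only master OS disk
        self.diff = {}               # this VM's writes, keyed by block number

    def write(self, block, data):
        # Writes never touch the master image; they land in the differencing disk.
        self.diff[block] = data

    def read(self, block):
        # A block the VM has already written is served from its differencing disk;
        # everything else falls through to the master OS disk.
        if block in self.diff:
            return self.diff[block]
        return self.master[block]


# Hypothetical master image with three blocks, shared by two VMs.
master = {0: "boot", 1: "kernel", 2: "apps"}
vm1 = DifferencingDisk(master)
vm2 = DifferencingDisk(master)

vm1.write(2, "apps+patch")
```

After the write, `vm1.read(2)` comes back from vm1's differencing disk, while `vm2.read(2)` still comes from the untouched master OS disk.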

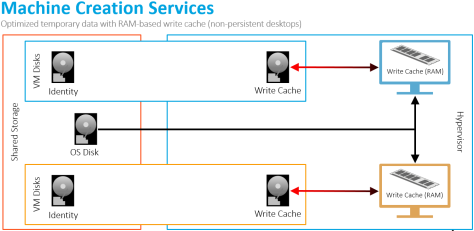

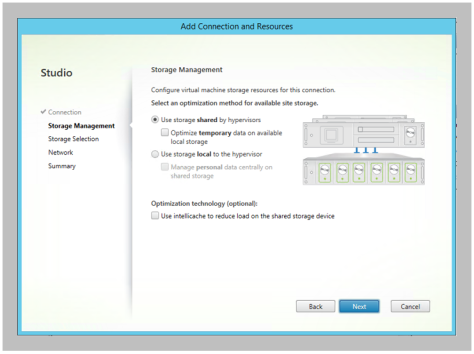

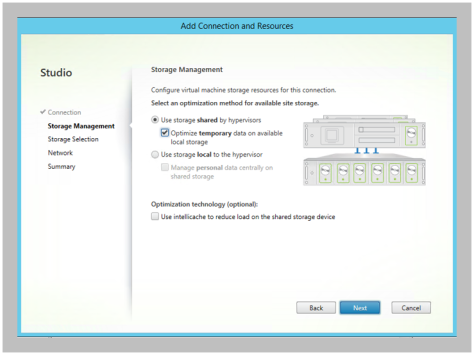

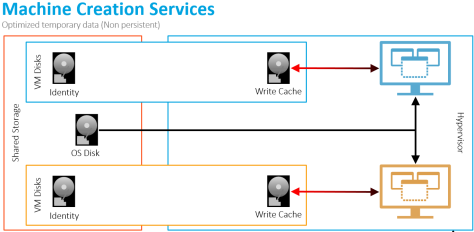

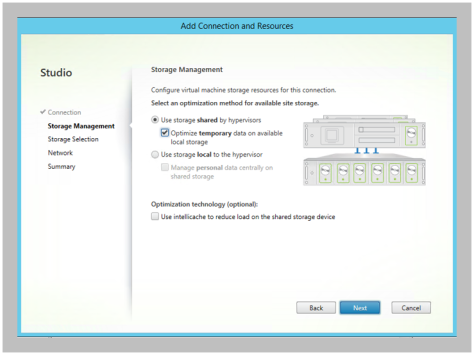

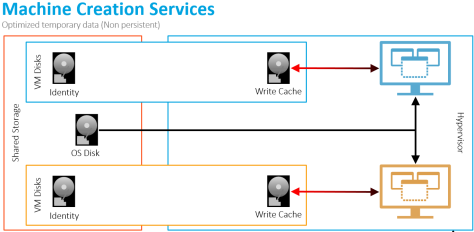

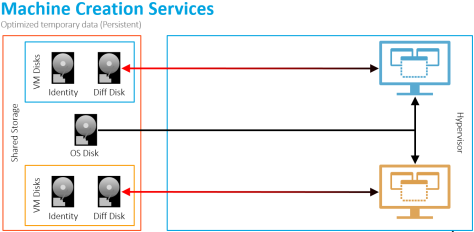

Scenario 2: Shared Storage with Optimized Temp Data on any Hypervisor

With XenApp and XenDesktop 7.9, admins can select the “Optimize temporary data on available local storage” option when creating a host connection.

Selecting this option results in the following changes to the architecture:

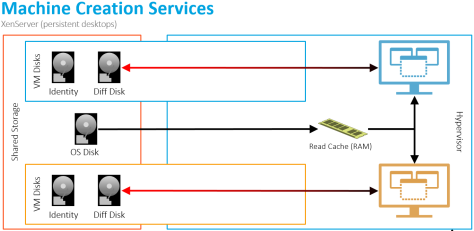

For non-persistent desktops, instead of the temporary writes going into the shared storage differencing disk, the writes are now captured within the write cache disk on the local hypervisor storage.

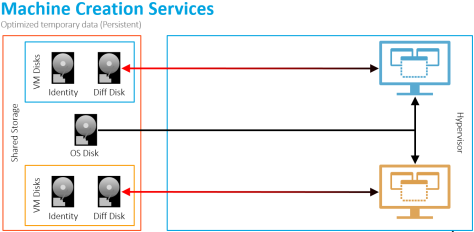

But for persistent desktops, the optimize temporary data setting is not used as all data is permanent. The writes are captured on shared storage within the differencing disk.

The value is that we don’t waste shared storage performance with data we don’t care about. We instead shift the storage IO to local, inexpensive disks.

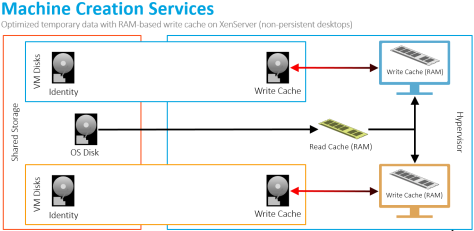

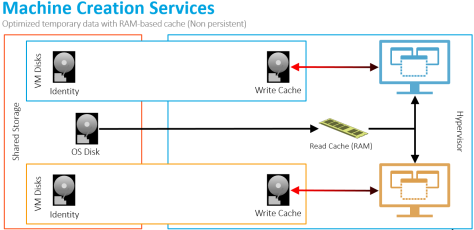

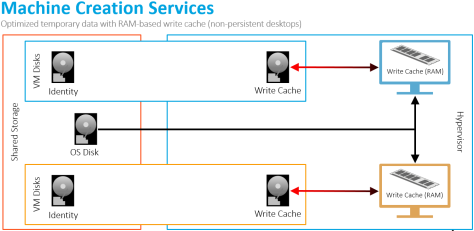

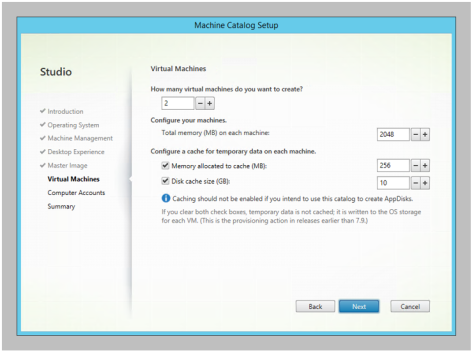

Scenario 3: Shared Storage with Optimized Temp Data and RAM Caching on any Hypervisor

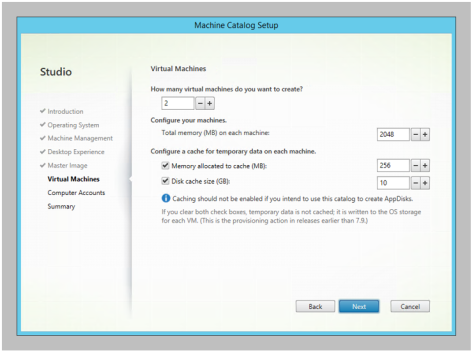

With XenApp and XenDesktop 7.9, a portion of the virtual machine’s RAM can be used to cache disk writes in order to reduce the impact on local/shared storage. During the creation of a new machine catalog, an admin defines the size of the RAM and disk cache.

The RAM cache operation adjusts the architecture as follows

For non-persistent desktops with a RAM-based write cache, the writes first go into the non-paged pool portion of RAM within the VM. As the RAM cache is consumed, the oldest data is written to the temporary disk-based write cache.

However, this option is not applicable for persistent desktops due to the risk of data loss. If disk writes are cached in volatile RAM and the host fails, those writes will be lost, potentially resulting in lost data and corruption.

For non-persistent desktops, when used in combination with optimizing temporary data, we not only shift our write performance to low-cost local disks, but we also reduce the amount of write IO activity going to those disks. This should further help reduce the costs by not requiring the use of local SSDs.
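The RAM cache overflow behavior can be sketched in a few lines of Python. This is only an illustration of the idea (RAM first, oldest data spills to the disk-based write cache), not the actual driver logic. The class name `RamWriteCache`, the block-count sizing, and the choice to treat a rewritten block as the newest entry are all assumptions on my part.

```python
from collections import OrderedDict


class RamWriteCache:
    """Toy write cache: RAM first, oldest blocks spill to a disk-based cache."""

    def __init__(self, ram_blocks):
        self.ram_blocks = ram_blocks  # hypothetical RAM cache size, in blocks
        self.ram = OrderedDict()      # oldest entry first
        self.disk = {}                # stands in for the disk-based write cache
        self.disk_writes = 0          # how many blocks actually hit the disk

    def write(self, block, data):
        # Assumption: rewriting a block makes it the newest entry in RAM.
        self.ram[block] = data
        self.ram.move_to_end(block)
        # Once RAM is full, push the oldest data out to the disk cache.
        while len(self.ram) > self.ram_blocks:
            old_block, old_data = self.ram.popitem(last=False)
            self.disk[old_block] = old_data
            self.disk_writes += 1

    def read(self, block):
        # RAM holds the most recent copy, so check it before the disk cache.
        if block in self.ram:
            return self.ram[block]
        return self.disk.get(block)


cache = RamWriteCache(ram_blocks=2)
cache.write(1, "a")
cache.write(2, "b")
cache.write(3, "c")  # RAM is full, so block 1 (the oldest) spills to disk
```

In this run only one of the three writes reaches the disk cache, which is the effect we are after: fewer write IOs landing on the local disks.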

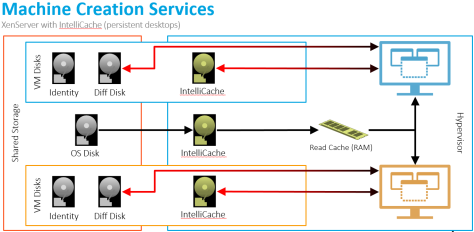

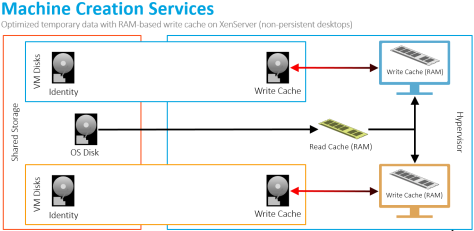

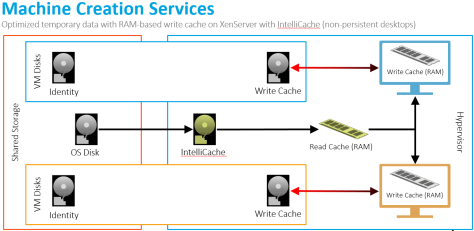

Scenario 4: Shared Storage with Optimized Temp Data and RAM Caching on Citrix XenServer

If the environment is deployed on Citrix XenServer, the architecture automatically includes a RAM-based read cache on each host.

For both non-persistent and persistent desktops, portions of the master OS image are cached within the XenServer host’s Dom0 RAM, so subsequent requests are served from local RAM instead of generating read IOPS on shared storage.

This is valuable because we significantly reduce the master image reads from our shared storage. If you have 50 XenServer hosts, with each running 100 Windows 10 virtual machines, each virtual machine will read the same data from the same master image. This will add significant amounts of read IO activity on shared storage. By caching the reads in local RAM for each XenServer host, we can drastically reduce our impact on shared storage.
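A quick back-of-the-envelope calculation shows the scale of the difference. The sketch below uses the 50 hosts and 100 VMs per host from the example above. The 1 GB of master image data read per VM is a made-up figure, and the cached case is a best case that assumes every VM reads the same blocks and that the host read cache is large enough to hold them.

```python
def master_image_reads_gb(hosts, vms_per_host, read_gb_per_vm, host_read_cache):
    """Estimate master image data pulled from shared storage, in GB."""
    if host_read_cache:
        # Best case: each host fetches the common blocks once,
        # then serves every other VM on that host from local RAM.
        return hosts * read_gb_per_vm
    # No host cache: every VM reads its own copy from shared storage.
    return hosts * vms_per_host * read_gb_per_vm


# read_gb_per_vm=1.0 is a hypothetical value, not a measured one.
without_cache = master_image_reads_gb(50, 100, 1.0, host_read_cache=False)
with_cache = master_image_reads_gb(50, 100, 1.0, host_read_cache=True)
reduction_factor = without_cache / with_cache
```

Under these assumptions the reduction factor equals the number of VMs per host. Real results will be lower because the cache is finite and not every VM reads identical blocks.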

We also have a RAM-based read cache in Provisioning Services. This capability increased boot performance by 4X. I would expect to see similar results with this XenServer feature.

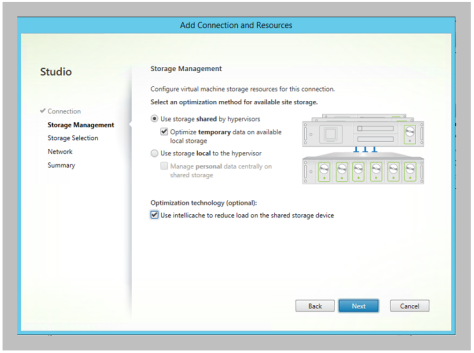

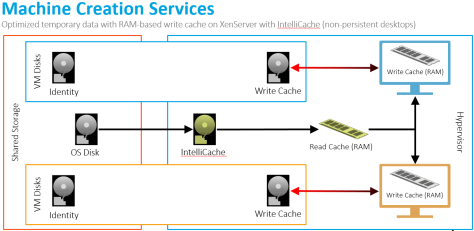

Scenario 5: Shared Storage with Optimized Temp Data and RAM Caching on Citrix XenServer with XenServer IntelliCache

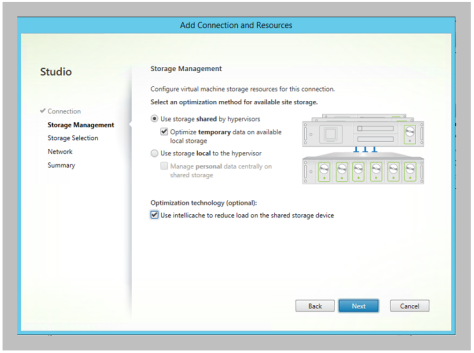

When the admin defines the host connection properties, Studio includes the IntelliCache option if the host connection is XenServer.

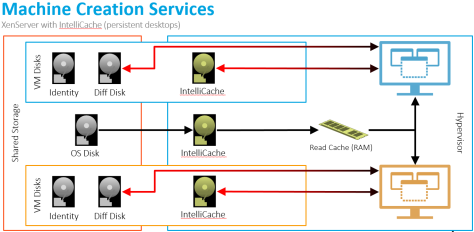

For non-persistent and persistent desktops, a local, disk-based cache of the master OS image is captured on each XenServer host, reducing read IOPS from shared storage. As items are accessed, they are placed within XenServer’s RAM-based cache.

The write operations are different based on whether the desktop is non-persistent or persistent.

For non-persistent, disk writes are first captured in the VM’s RAM cache. When the RAM cache is consumed, the oldest content is written to the local write cache.

For persistent desktops, disk writes are simultaneously captured in the local IntelliCache disk (.VHDCache file in /var/run/sr-mount) and in the shared storage differencing disk. When the VM reads data from disk, it first checks the local IntelliCache disk and then the shared storage differencing disk.
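The persistent desktop behavior is essentially a write-through cache. Here is a minimal sketch, assuming a simple block-level model. The class name `PersistentIntelliCacheDisk` and the `shared_reads` counter are hypothetical and exist only to show where the reads end up.

```python
class PersistentIntelliCacheDisk:
    """Toy write-through model for a persistent desktop with IntelliCache."""

    def __init__(self):
        self.local_cache = {}  # stands in for the local IntelliCache disk
        self.shared_diff = {}  # stands in for the shared storage differencing disk
        self.shared_reads = 0  # reads that had to go to shared storage

    def write(self, block, data):
        # Each write is captured in both places, so nothing permanent
        # lives only on the host's local disk.
        self.local_cache[block] = data
        self.shared_diff[block] = data

    def read(self, block):
        # Check the local IntelliCache disk first, then shared storage.
        if block in self.local_cache:
            return self.local_cache[block]
        self.shared_reads += 1
        return self.shared_diff.get(block)


disk = PersistentIntelliCacheDisk()
disk.write(7, "user-profile")
first_read = disk.read(7)         # served from the local cache

disk.local_cache.clear()          # simulate losing the local cache
read_after_loss = disk.read(7)    # still available from shared storage
```

The second read shows why the double write matters: the data survives the loss of the local cache, at the cost of one read against shared storage.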

The value for this configuration is two-fold:

- Host-based IntelliCache Disk: Using IntelliCache with the Read Cache (RAM) provides us with two levels of caching on XenServer. This could help reduce reads from shared storage in situations where our Read Cache (RAM) is not large enough. Imagine if we have multiple images being delivered to each XenServer host. Our read cache (RAM) will not be large enough, resulting in increased read IO activity on shared storage. By combining the two, we should be able to keep shared storage read IO activity to a minimum.

- VM-Based IntelliCache Disk: For persistent desktops, even though each write is performed twice (local IntelliCache disk and differencing disk on shared storage), the reads will come from the local IntelliCache disk, thus helping to reduce the load to shared storage. How much will this help the user experience and cost? That is still to be determined.
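To illustrate the first point, the two levels of read caching can be modeled as a small RAM tier in front of a larger local disk tier. This is a rough sketch under my own assumptions: the class name `TieredReadCache` is made up, the RAM tier uses least-recently-used eviction (the real eviction policy is not something I can confirm), and the local disk tier is treated as unlimited.

```python
from collections import OrderedDict


class TieredReadCache:
    """Toy two-level read cache: RAM, then local disk, then shared storage."""

    def __init__(self, shared_image, ram_blocks):
        self.shared_image = shared_image  # master OS image on shared storage
        self.ram_blocks = ram_blocks      # hypothetical RAM cache size, in blocks
        self.ram = OrderedDict()          # least recently used entry first
        self.local_disk = {}              # stands in for the IntelliCache disk
        self.shared_reads = 0             # reads that reached shared storage

    def read(self, block):
        if block in self.ram:
            self.ram.move_to_end(block)
            return self.ram[block]
        if block in self.local_disk:
            data = self.local_disk[block]
        else:
            # Miss in both tiers: go to shared storage and keep a local copy.
            self.shared_reads += 1
            data = self.shared_image[block]
            self.local_disk[block] = data
        # Place the block in RAM, evicting the least recently used one if full.
        self.ram[block] = data
        if len(self.ram) > self.ram_blocks:
            self.ram.popitem(last=False)
        return data


# A 4-block image read three times over, with room for only 2 blocks in RAM.
image = {n: f"block-{n}" for n in range(4)}
cache = TieredReadCache(image, ram_blocks=2)
for _ in range(3):
    for n in range(4):
        cache.read(n)
```

Even though the RAM tier is too small for this access pattern, only the first pass touches shared storage. Every later miss in RAM is absorbed by the local disk tier.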

Daniel (Follow on Twitter @djfeller)

Did I miss any criteria? Let me know
